
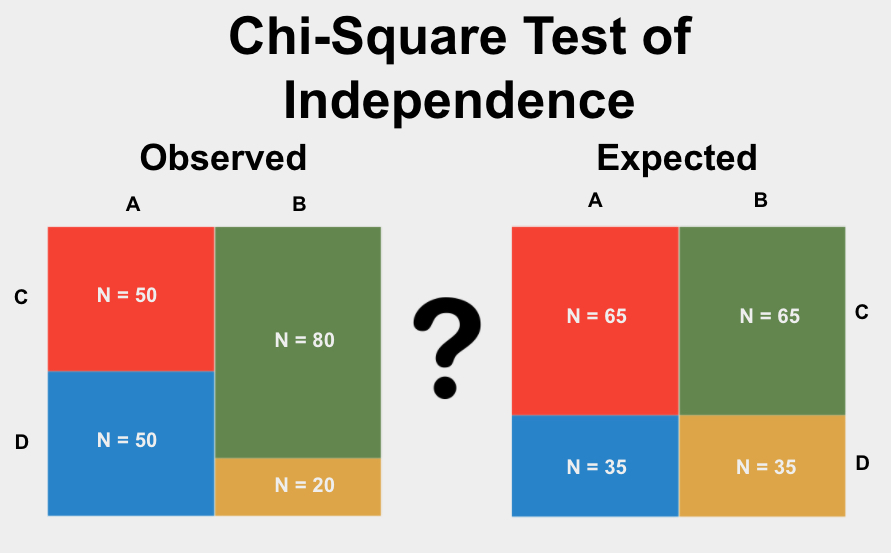

The Chi-Square Test of Independence is a statistical method used to determine whether there is a relationship between two categorical variables. It compares the observed frequency of each combination of categories with the frequency that would be expected if there were no relationship. The test calculates a Chi-Square statistic, which is then compared to a critical value from the chi-square distribution to determine whether the relationship between the variables is statistically significant. This test is commonly used in fields such as psychology, sociology, and market research to draw conclusions about the relationship between categorical variables, in both academic and practical settings.
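The comparison of observed and expected frequencies described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a replacement for a statistics package. The function names and the 2x3 table of counts are hypothetical, and the sketch assumes every row and column total is greater than zero.

```python
def expected_counts(observed):
    """Expected count per cell if the two variables were unrelated."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    total = sum(row_totals)
    # Expected count = row total * column total / grand total
    return [[r * c / total for c in col_totals] for r in row_totals]


def chi_square_statistic(observed):
    """Sum of (observed - expected)^2 / expected over all cells."""
    expected = expected_counts(observed)
    return sum(
        (o - e) ** 2 / e
        for obs_row, exp_row in zip(observed, expected)
        for o, e in zip(obs_row, exp_row)
    )


def degrees_of_freedom(observed):
    """(number of rows - 1) * (number of columns - 1)."""
    return (len(observed) - 1) * (len(observed[0]) - 1)


# Hypothetical counts: 2 groups (rows) by 3 categories (columns)
observed = [[10, 20, 30],
            [20, 20, 20]]

print(expected_counts(observed))
print(chi_square_statistic(observed))
print(degrees_of_freedom(observed))
```

The larger the statistic relative to the critical value for the given degrees of freedom, the stronger the evidence against "no relationship".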

What is the Chi-Square Test of Independence?

The Chi-Square Test of Independence is a statistical test used to determine whether the proportions of categories in one categorical variable differ significantly across the groups of another. To use this test, you should have two categorical (group) variables, each with two or more options, and you should have 10 or more observations in every cell. See more below.

The Chi-Square Test of Independence is also known as the Chi-Squared Test of Independence. The same calculation is often called the Chi-Square Test of Homogeneity when it is used to compare groups.

Assumptions for the Chi-Square Test of Independence

Every statistical method has assumptions. An assumption is a property that your data must satisfy in order for the method's results to be accurate.

The assumptions for the Chi-Square Test of Independence include:

- Random Sample

- Independence

- Mutually exclusive groups

Let’s dive into what that means.

Random Sample

The data points for each group in your analysis must have come from a simple random sample. This is important because if your groups were not randomly sampled, your results may not reflect the population you care about. In statistical terms this is called bias: a systematic tendency toward incorrect results because of how the data were collected.

Independence

Each of your observations (data points) should be independent. This means that each value of your variables doesn’t “depend” on any of the others. For example, this assumption is usually violated when there are multiple data points over time from the same unit of observation (e.g. subject/customer/store), because the data points from the same unit of observation are likely to be related or affect one another.
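One simple way to screen for the repeated-measurement problem described above is to check whether any unit of observation appears more than once. The sketch below assumes each data point carries an identifier for its unit; the function name and the customer IDs are hypothetical. It only catches this one kind of dependence, so a clean result does not prove the observations are independent.

```python
from collections import Counter


def repeated_units(unit_ids):
    """Return the IDs that appear more than once, in sorted order."""
    counts = Counter(unit_ids)
    return sorted(unit for unit, n in counts.items() if n > 1)


# Hypothetical customer IDs, one per data point
customer_ids = ["c1", "c2", "c3", "c2", "c4", "c1"]

dupes = repeated_units(customer_ids)
if dupes:
    print("Units with more than one data point:", dupes)
```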

Mutually Exclusive Groups

The groups of each categorical variable should be mutually exclusive, so that every observation belongs to exactly one group. For example, if your categorical variable is hungry (yes/no), then your groups are mutually exclusive, because one person cannot belong to both groups at once.

When to use the Chi-Square Test of Independence?

You should use the Chi-Square Test of Independence in the following scenario:

- You want to test for a difference between groups

- Your variable of interest is proportional or categorical

- You have two or more options

- You have independent samples

- You have 10 or more in every cell

Let’s clarify these to help you know when to use the Chi-Square Test of Independence.

Difference

You are looking for a statistical test to look at how a variable differs between two groups. Other types of analyses include testing for a relationship between two variables or predicting one variable using another variable (prediction).

Proportional or Categorical

For this test, your variable of interest must be proportional or categorical. A categorical variable is a variable that contains categories without a natural order. Examples of categorical variables are eye color, city of residence, type of dog, etc. Proportional variables are derived from categorical variables, for instance: the share of people who converted on two different versions of your website (10% vs 15%), the number of people who voted vs people who did not vote, the proportion of plants that died vs survived an experimental treatment, etc.

If your variable of interest is continuous and you want to compare it between two groups, you may want to use an Independent Samples T-Test.
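To make the link between categorical data and proportions concrete, the sketch below tallies raw records into a table of counts and then derives the conversion proportions. The records are hypothetical and chosen to match the 10% vs 15% website example above (100 visitors per version); the function name `crosstab` is also just an illustration.

```python
from collections import Counter


def crosstab(records):
    """Tally (group, outcome) pairs into a table of counts."""
    counts = Counter(records)
    rows = sorted({group for group, _ in records})
    cols = sorted({outcome for _, outcome in records})
    table = [[counts[(g, o)] for o in cols] for g in rows]
    return rows, cols, table


# Hypothetical raw data: one (website version, outcome) pair per visitor
records = (
    [("A", "converted")] * 10 + [("A", "not converted")] * 90
    + [("B", "converted")] * 15 + [("B", "not converted")] * 85
)

rows, cols, table = crosstab(records)

# Proportion converted in each group: converted count / group total
proportions = {g: row[0] / sum(row) for g, row in zip(rows, table)}

print(rows, cols, table)
print(proportions)
```

The table of counts, not the proportions, is what the test itself operates on.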

Two or more Options

Your categorical variables should have two or more possible options. Some examples of variables like this are whether someone made a purchase (yes/no), color (black/white/red/etc), and whether someone recovered from disease (yes/no).

If you have only two options and 10 or more in every cell, you could also consider using the Two-Proportion Z-Test.

Independent Samples

Independent samples means that your groups are not related in any way. For example, repeated measurements from the same group over time are often not independent samples, because each observation from the same person is likely related to other observations from that person.

If you have repeated measures from a single sample, you should consider using the McNemar Test.

10 or more in every Cell

The rule-of-thumb we recommend is to use this test when you have 10 or more observations in every cell. “Cell” in this case refers to one group, or one combination of groups when you have two variables, and its value is the count of observations that fall into it. For example, if you have a list of survey responses with 5 “yes” and 1 “no”, there are 5 and 1 value(s) per cell, respectively.

If you have fewer than 10 in a cell, we recommend using Fisher’s Exact Test. If you have 10 or more in every cell and more than 1000 total observations, we recommend using the G-Test.
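The rule of thumb in this section can be written as a small helper. This is only a sketch of the guidance above, not a standard routine: the function name, parameter names, and example tables are hypothetical, and it treats exactly 10 in a cell as meeting the threshold.

```python
def suggest_test(table, min_cell=10, large_n=1000):
    """Suggest a test from the cell counts, following this article's rule of thumb."""
    cells = [count for row in table for count in row]
    if min(cells) < min_cell:
        # At least one cell has fewer than 10 observations
        return "Fisher's Exact Test"
    if sum(cells) > large_n:
        # Every cell has 10 or more and there are more than 1000 observations
        return "G-Test"
    return "Chi-Square Test of Independence"


# Hypothetical tables of counts
print(suggest_test([[12, 9], [15, 20]]))
print(suggest_test([[300, 300], [300, 300]]))
print(suggest_test([[30, 20], [20, 30]]))
```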

Chi-Square Test of Independence Example

Group: Treatment (A/B)

Variable: Recovered from disease (yes/no)

In this example, we are interested in investigating whether our two treatment groups differ significantly in rate of recovery from disease. The null hypothesis is that there is no difference in recovery rates between the two groups.

Because our variables have two or more possible values (A/B and yes/no), and our data meet all other assumptions, we know that the Chi-Square Test of Independence is appropriate to use.

The analysis will result in a probability, or p-value. The p-value is the probability of seeing a difference at least as large as the one we observed if there were actually no difference in recovery rate between the two treatment types. A p-value less than or equal to 0.05 is conventionally treated as statistically significant, meaning a difference this large would be unlikely to arise by chance alone if the null hypothesis were true.
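As a rough illustration of the treatment example, the sketch below computes the Chi-Square statistic and p-value for a 2x2 table using only the Python standard library. The counts are hypothetical (the article gives no data), and the function names are illustrative. The sketch uses the shortcut formula for a 2x2 table with one degree of freedom and does not apply Yates' continuity correction, so software that applies that correction will report a slightly different value.

```python
import math


def chi_square_2x2(a, b, c, d):
    """Chi-Square statistic for the 2x2 table [[a, b], [c, d]] (no continuity correction)."""
    n = a + b + c + d
    numerator = n * (a * d - b * c) ** 2
    denominator = (a + b) * (c + d) * (a + c) * (b + d)
    return numerator / denominator


def p_value_df1(statistic):
    """Upper-tail probability of a chi-square distribution with 1 degree of freedom."""
    return math.erfc(math.sqrt(statistic / 2))


# Hypothetical counts:      recovered  not recovered
# Treatment A                   30          20
# Treatment B                   20          30
statistic = chi_square_2x2(30, 20, 20, 30)
p_value = p_value_df1(statistic)

print(f"chi-square = {statistic:.2f}, p = {p_value:.4f}")
# prints: chi-square = 4.00, p = 0.0455
```

With these made-up counts the p-value falls just under 0.05, so the difference in recovery rates would be reported as statistically significant at that threshold.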