
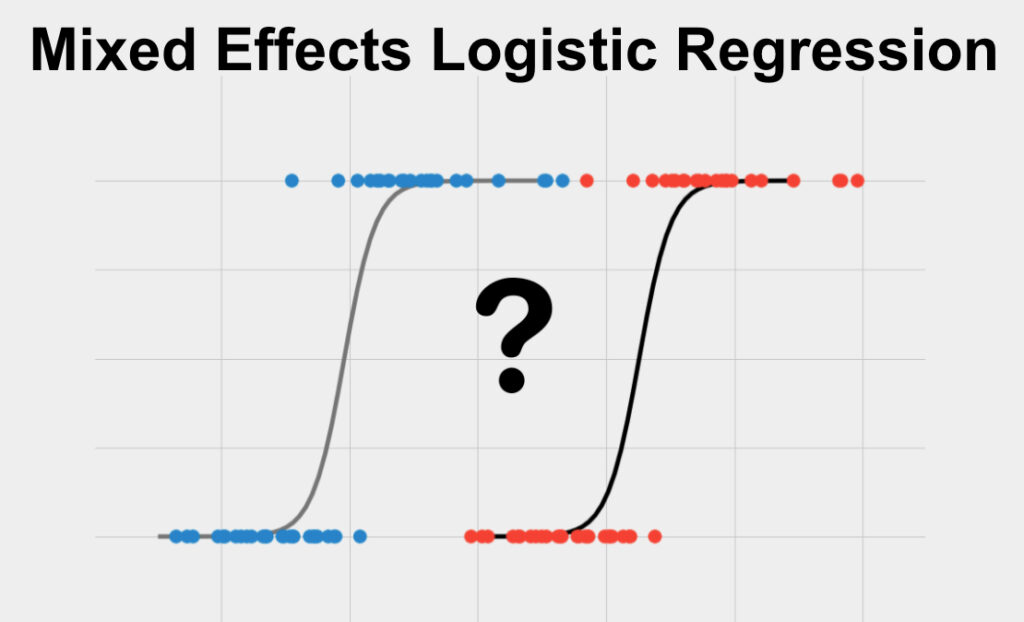

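Mixed Effects Logistic Regression is a statistical method for modeling a binary outcome using both fixed and random effects. Fixed effects describe relationships assumed to be the same across the whole population, such as the effect of a predictor. Random effects capture variation between groups or between units that are measured repeatedly, such as differences between individual customers or stores. This lets the model account for both individual-level and group-level variation when describing the relationship between the independent and dependent variables. The method is common in the social and behavioral sciences and in medical research, where data are often clustered or collected repeatedly from the same subjects. When data are clustered, Mixed Effects Logistic Regression gives more trustworthy standard errors and significance tests than ordinary logistic regression. This is because it accounts for the correlation among observations in the same group, whereas ordinary logistic regression assumes that every observation is independent.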

What is Mixed Effects Logistic Regression?

Mixed Effects Logistic Regression is a statistical model used to predict a single binary variable from one or more other variables when the observations are grouped, for example when the same person is measured repeatedly. It is also used to quantify the relationship between those variables. The variable you want to predict must be binary, and your data should meet the assumptions listed below.
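The model can be written compactly as an equation. This is a minimal sketch that assumes the simplest case: a single predictor x and a random intercept for each group. Here i indexes observations, j indexes groups (for example, customers), β₀ and β₁ are the fixed effects shared by all groups, and u_j is the random effect that shifts the log odds up or down for group j:

```latex
\operatorname{logit}\big(P(y_{ij}=1)\big)
  = \ln\frac{P(y_{ij}=1)}{1-P(y_{ij}=1)}
  = \beta_0 + \beta_1 x_{ij} + u_j,
\qquad u_j \sim \mathcal{N}(0,\ \sigma_u^2)
```

Setting every u_j to zero recovers ordinary logistic regression. The estimated variance σ_u² indicates how much the groups differ from one another.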

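Mixed Effects Logistic Regression is sometimes also called Repeated Measures Logistic Regression, Multilevel Logistic Regression, or Multilevel Binary Logistic Regression. It is a special case of the generalized linear mixed model (GLMM), using a binomial outcome and a logit link.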

Assumptions for Mixed Effects Logistic Regression

Every statistical method has assumptions: properties your data must satisfy for the method's results to be valid.

The assumptions for Mixed Effects Logistic Regression include:

- Linearity

- No Outliers

- No Multicollinearity

Let’s dive into each one of these separately.

Linearity

Logistic regression fits a logistic (S-shaped) curve to binary data. This curve gives the predicted probability of the outcome, such as making a purchase, at each value of the independent variables. Logistic regression assumes that the log odds of this probability, ln(p / (1 - p)), is a linear function of each continuous predictor variable.
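The sketch below makes the linearity assumption concrete. The coefficients `beta0` and `beta1` are hypothetical values chosen for illustration. Equal steps in x produce unequal steps in probability, but equal steps in log odds:

```python
import math


def inv_logit(eta: float) -> float:
    """Convert log odds (the linear predictor) into a probability."""
    return 1.0 / (1.0 + math.exp(-eta))


def logit(p: float) -> float:
    """Convert a probability into log odds: ln(p / (1 - p))."""
    return math.log(p / (1.0 - p))


# Hypothetical coefficients, for illustration only.
beta0, beta1 = -2.0, 0.5

xs = [0, 1, 2, 3, 4]
probs = [inv_logit(beta0 + beta1 * x) for x in xs]
log_odds = [logit(p) for p in probs]

# Probabilities follow an S-shaped curve, so their step sizes differ...
prob_steps = [round(b - a, 4) for a, b in zip(probs, probs[1:])]
# ...while the log odds rise by exactly beta1 per unit of x (the linearity assumption).
logodds_steps = [round(b - a, 4) for a, b in zip(log_odds, log_odds[1:])]

print(prob_steps)
print(logodds_steps)  # [0.5, 0.5, 0.5, 0.5]
```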

No Outliers

The variables that you care about should not contain extreme outliers. Logistic regression is sensitive to outliers, which are data points with unusually large or small values, because a few extreme predictor values can strongly influence the estimated coefficients. You can check your variables for outliers by plotting them and looking for points that lie far from all the others.
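Plotting is the most direct check. A simple numeric screen is also possible, and the sketch below uses Tukey's fences: values more than 1.5 × IQR beyond the quartiles are flagged. The multiplier 1.5 is a common convention, not a rule from this article, and the `minutes` data are hypothetical:

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR below Q1 or above Q3 (Tukey's fences).

    k=1.5 is a conventional choice, assumed here for illustration.
    """
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]


# Hypothetical minutes spent per visit; 240 is far from the rest.
minutes = [12, 15, 9, 14, 11, 13, 10, 16, 240]
print(iqr_outliers(minutes))  # [240]
```

A flagged value is a prompt to investigate, for example for a data-entry error. It is not an automatic reason to delete the observation.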

No Multicollinearity

Multicollinearity occurs when two or more of the independent variables are strongly correlated with each other. When multicollinearity is present, the regression coefficients and their statistical significance become unstable and less trustworthy, although it doesn’t affect how well the model fits the data per se.
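A common way to quantify multicollinearity is the variance inflation factor (VIF). This sketch assumes a model with exactly two predictors. In that case the VIF reduces to 1 / (1 - r²), where r is the Pearson correlation between the two predictors. The predictor values are hypothetical:

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def vif_two_predictors(x1, x2):
    """VIF when the model has exactly two predictors: 1 / (1 - r^2)."""
    r = pearson_r(x1, x2)
    return 1.0 / (1.0 - r ** 2)


# Hypothetical predictors: minutes on site and pages viewed move almost in lockstep.
minutes_on_site = [5, 10, 15, 20, 25]
pages_viewed = [3, 6, 8, 13, 15]
print(round(vif_two_predictors(minutes_on_site, pages_viewed), 1))
```

A VIF of 1 means the predictors are uncorrelated. A common rule of thumb treats values above 5 or 10 as a sign of problematic multicollinearity. With more than two predictors, each VIF comes from regressing one predictor on all the others.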

When to use Mixed Effects Logistic Regression?

You should use Mixed Effects Logistic Regression when all of the following apply:

- You want to use one or more variables to predict another, or you want to quantify the numerical relationship between these variables

- The variable you want to predict (your dependent variable) is binary

- You have one or more independent variables, that is, variables you are using as predictors

- Your data contain repeated measures from the same sample or have a clustered or hierarchical structure

Let’s clarify these to help you know when to use Mixed Effects Logistic Regression.

Prediction

You are looking for a statistical test to predict one variable using another. This is a prediction question. Other types of analyses include examining the strength of the relationship between two variables (correlation) or examining differences between groups (difference).

Binary Dependent Variable

The variable you want to predict must be binary. Binary data have only two possible values. Some examples of binary data include: true/false, purchased the product or not, has the disease or not, etc.

Types of data that are NOT binary include ordered data (such as finishing place in a race or best-business rankings), categorical data with more than two categories (gender, eye color, race, etc.), and continuous data (height, income, etc.).

If your dependent variable is continuous, you should use Multiple Linear Regression; if it is categorical with more than two categories, you should use Multinomial Logistic Regression or Linear Discriminant Analysis.

One or More Independent Variables

Mixed Effects Logistic Regression can be used with one or more predictor variables, which may be measured at multiple points in time.

If you have only one independent variable and your data have no repeated measures or clustering, then Simple Logistic Regression is sufficient.

Repeated Measures

This method suits situations with multiple observations for each unit of observation. The unit of observation is whatever makes up a “data point”, for example a store, a customer, or a city. The method also covers data with a hierarchical or clustered structure. For example, if each data point is a store, the model can account for stores being clustered by state or region.
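One way to gauge how much clustering matters is the intraclass correlation (ICC). The ICC is the share of variation in the latent log odds that lies between groups rather than within them. This sketch uses the standard latent-variable formula for a random-intercept logistic model, in which the within-group variance is fixed at π²/3. The random-intercept variance `sigma2_u` is an assumed input that would come from a fitted model:

```python
import math


def latent_icc(sigma2_u: float) -> float:
    """Intraclass correlation on the latent (log-odds) scale for a random-intercept
    logistic model. The level-1 variance of the standard logistic distribution is pi^2 / 3."""
    if sigma2_u < 0:
        raise ValueError("a variance cannot be negative")
    return sigma2_u / (sigma2_u + math.pi ** 2 / 3)


# Hypothetical random-intercept variances.
for sigma2_u in (0.0, 0.5, 1.0, 3.0):
    print(sigma2_u, round(latent_icc(sigma2_u), 3))
```

An ICC near 0 means the groups barely differ. Larger values mean that observations in the same group are strongly correlated, which is when ignoring the clustering is most misleading.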

If you have one or more independent variables, but they are measured at only a single point in time or are without clustering or hierarchy, then you should use Multiple Logistic Regression.
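For a binary outcome, the selection rules in this section can be condensed into a small helper. The function `suggest_binary_outcome_method` is hypothetical and summarizes this article's guidance only. It is not part of any library:

```python
def suggest_binary_outcome_method(n_predictors: int, clustered: bool) -> str:
    """Hypothetical helper summarizing this article's rules for a binary outcome.

    n_predictors: number of independent variables
    clustered: True if the data have repeated measures or a clustered/hierarchical structure
    """
    if n_predictors < 1:
        raise ValueError("at least one independent variable is required")
    if clustered:
        return "Mixed Effects Logistic Regression"
    if n_predictors == 1:
        return "Simple Logistic Regression"
    return "Multiple Logistic Regression"


print(suggest_binary_outcome_method(n_predictors=1, clustered=True))
print(suggest_binary_outcome_method(n_predictors=3, clustered=False))
```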

Mixed Effects Logistic Regression Example

Dependent Variable: Purchase made (Yes/No)

Independent Variable 1: Time spent (in store or on website)

Note: The data contain repeated measures over time for each consumer.
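To make the example concrete, the sketch below simulates data with this structure. Each row is one visit, and each customer appears several times. All parameter values (`beta0`, `beta1`, `sigma_u`, the 0 to 30 minute range) are hypothetical. Each customer gets a random intercept, so purchases by the same customer are correlated:

```python
import math
import random


def simulate_purchases(n_customers=50, visits_per_customer=6, beta0=-3.0,
                       beta1=0.15, sigma_u=1.0, seed=42):
    """Simulate long-format data: one row per visit, several visits per customer.

    All parameter values are hypothetical. Customer j gets a random intercept
    u_j ~ N(0, sigma_u^2) that shifts the log odds of every one of their visits.
    """
    rng = random.Random(seed)
    rows = []
    for customer in range(n_customers):
        u_j = rng.gauss(0.0, sigma_u)  # customer-level random effect
        for visit in range(visits_per_customer):
            minutes = rng.uniform(0, 30)  # time spent on this visit
            eta = beta0 + beta1 * minutes + u_j  # log odds of purchasing
            p = 1.0 / (1.0 + math.exp(-eta))
            purchased = 1 if rng.random() < p else 0
            rows.append({"customer": customer, "visit": visit,
                         "minutes": minutes, "purchased": purchased})
    return rows


data = simulate_purchases()
print(len(data))  # 300
print(data[0])
```

A mixed model routine, such as `glmer(..., family = binomial)` from R's lme4 package, would fit this kind of data with a random intercept for `customer`.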

The null hypothesis, which is statistical lingo for what would happen if the treatment does nothing, is that there is no relationship between time spent and whether or not a purchase is made. Our test assesses how likely it would be to see a relationship at least as strong as the one in our data if this null hypothesis were true.
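Mixed model software typically tests each fixed effect with a Wald z statistic, which is the estimate divided by its standard error, and reports a two-sided p-value. The sketch below computes both by hand. The estimate and standard error are hypothetical values, not output from a real fit:

```python
import math


def wald_test(estimate: float, std_error: float):
    """Two-sided Wald z-test of H0: coefficient = 0."""
    z = estimate / std_error
    # Two-sided p-value, 2 * (1 - Phi(|z|)), written with the complementary error function.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical fitted values for the time-spent coefficient.
z, p = wald_test(estimate=0.15, std_error=0.04)
print(round(z, 2), p)
```

A small p-value means data like ours would be unlikely if time spent had no relationship with purchasing. It does not give the probability that the null hypothesis is true.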

We gather our data and, after ensuring that the assumptions of Mixed Effects Logistic Regression are met, we perform the analysis.

When we run this analysis, we get a coefficient for each term in the model. The coefficient for our independent variable, time spent, is the expected increase or decrease in the log odds of a purchase for each one-unit increase in time spent. This is for a given customer, that is, holding that customer’s random effect constant.
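Log odds are hard to read directly, so coefficients are often converted. Exponentiating a coefficient gives an odds ratio, and applying the inverse logit to the linear predictor gives a predicted probability. The sketch below assumes hypothetical fitted values `beta0 = -3.0` and `beta1 = 0.15` (log odds per minute). The function `purchase_probability` is an illustrative helper:

```python
import math

# Hypothetical fitted values, for illustration only.
beta0 = -3.0   # fixed intercept: log odds of purchase at 0 minutes for a typical customer
beta1 = 0.15   # fixed effect: change in log odds per extra minute spent

odds_ratio = math.exp(beta1)  # multiplicative change in the odds per extra minute
print(round(odds_ratio, 3))  # 1.162


def purchase_probability(minutes: float, u_j: float = 0.0) -> float:
    """Predicted purchase probability for a customer with random intercept u_j.

    u_j = 0 corresponds to a 'typical' customer; positive u_j means a customer
    who is more likely than average to buy at any given time spent.
    """
    eta = beta0 + beta1 * minutes + u_j
    return 1.0 / (1.0 + math.exp(-eta))


print(round(purchase_probability(10), 3))  # 0.182
print(round(purchase_probability(10, u_j=1.0), 3))
```

Because these are conditional (customer-specific) effects, the odds ratio compares visits by the same customer, or by customers with the same random intercept.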

In addition, the fitted model can be used to compute an accuracy measure. Accuracy is the proportion of observations of the binary outcome variable that the logistic regression model predicts correctly. In this example, the accuracy would be the proportion of observations (customer visits) that the model correctly classified as ending in a purchase or not.
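Computing accuracy requires turning predicted probabilities into yes/no predictions. The sketch below assumes a cutoff of 0.5, which is a common default rather than something this article specifies. The observed outcomes and predicted probabilities are hypothetical:

```python
def accuracy(y_true, p_pred, threshold=0.5):
    """Share of observations whose predicted class (p >= threshold) matches the observed 0/1 outcome."""
    if len(y_true) != len(p_pred):
        raise ValueError("y_true and p_pred must have the same length")
    correct = sum(int(p >= threshold) == y for y, p in zip(y_true, p_pred))
    return correct / len(y_true)


# Hypothetical observed purchases and predicted probabilities for six visits.
observed = [1, 0, 0, 1, 0, 1]
predicted = [0.81, 0.22, 0.64, 0.55, 0.10, 0.47]
print(accuracy(observed, predicted))  # 0.6666666666666666
```

Four of the six visits are classified correctly here. Changing `threshold` trades false positives against false negatives, so the accuracy depends on the chosen cutoff.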