The Pearson Correlation coefficient, often denoted as Pearson’s r, is a foundational statistical tool used to quantify the strength and direction of the linear association between two sets of numerical data. The coefficient takes a value from -1 to 1, inclusive. A coefficient of 1 signifies a perfect positive correlation, meaning that as one variable increases, the other increases proportionally. Conversely, a value of -1 denotes a perfect negative (or inverse) correlation. A coefficient of 0 indicates no linear relationship between the variables being analyzed, although a non-linear relationship may still exist. Pearson’s r is widely used in quantitative research and data analysis to describe how variables move together. The relationship is typically visualized with a scatter plot, which also helps confirm the necessary assumption of linearity before computation.

Defining the Pearson Correlation Coefficient

The Pearson Correlation (or Pearson Product-Moment Correlation Coefficient, PPMCC) serves as the benchmark for measuring the linear dependence between two datasets, X and Y. It is one of the most frequently employed statistical procedures because of its intuitive interpretation and robust application across various fields, including finance, psychology, and engineering. When we analyze data using Pearson’s r, we are specifically testing whether an increase in one variable corresponds to a predictable increase or decrease in the other, assuming this relationship can be best modeled by a straight line.
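As a minimal sketch of what the coefficient computes, the function below follows the standard textbook definition: the sum of cross-products of deviations from the means, divided by the square root of the product of the two sums of squared deviations. The function name `pearson_r` and the sample values are illustrative assumptions, not taken from any particular library or dataset.

```python
import math


def pearson_r(x, y):
    """Pearson's r: co-variation of x and y scaled by their individual spreads."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    dx = [xi - mean_x for xi in x]  # deviations of X from its mean
    dy = [yi - mean_y for yi in y]  # deviations of Y from its mean
    cross = sum(a * b for a, b in zip(dx, dy))
    ss_x = sum(a * a for a in dx)
    ss_y = sum(b * b for b in dy)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("r is undefined when a variable is constant")
    return cross / math.sqrt(ss_x * ss_y)


# Made-up data: Y rises (or falls) in exact proportion to X.
perfect_up = pearson_r([1, 2, 3, 4], [2, 4, 6, 8])
perfect_down = pearson_r([1, 2, 3, 4], [8, 6, 4, 2])
print(perfect_up, perfect_down)
```

The two made-up series are exactly linear, so they land at the two extremes of the scale; real data will fall somewhere in between.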

The validity and reliability of the Pearson Correlation depend heavily on fulfilling specific statistical requirements. Before calculating the coefficient, researchers must ensure their variables are continuous variables, exhibit a normal distribution, display a clear linear relationship, and are free from severe outliers. Furthermore, the variability of the data points around the regression line should remain relatively constant across the range of the variables—a condition known as homoscedasticity. Failing to meet these crucial assumptions necessitates the use of non-parametric alternatives.

The Pearson Correlation is also commonly referred to by several names, including Pearson’s r, the Pearson product-moment correlation coefficient (PPMCC), and bivariate correlation.

Critical Assumptions Governing Pearson Correlation

All parametric statistical tests, including the Pearson Correlation, rely on a specific set of underlying assumptions about the data distribution and structure. These assumptions are not merely suggestions; they are prerequisites that must be met to ensure the statistical results are valid, unbiased, and generalizable. If the data significantly violate one or more of these core properties, the resulting correlation coefficient may be misleading, necessitating the use of alternative non-parametric measures.

Understanding and verifying these prerequisites is arguably the most crucial step prior to calculation. Data validation procedures typically involve exploratory data analysis techniques such as histograms, scatter plots, and formal statistical tests (e.g., Shapiro-Wilk test for normality). The five fundamental assumptions required for the accurate application of Pearson’s r are outlined below, providing a clear framework for data preparation:

- The variables must be measured at the Continuous level.

- The variables should exhibit Normal Distribution.

- The relationship between the variables must be Linear.

- The dataset must contain No Outliers or have minimal influence from them.

- The data must satisfy Homoscedasticity (Similar Spread Across Range).

We will now explore each of these indispensable assumptions in detail, providing context and guidance on how to assess their fulfillment in your dataset.

Data Requirement: Continuous Variables

For Pearson’s correlation coefficient to be applicable, both variables under investigation must be measured on a continuous scale. A continuous variable is one that can theoretically take on any value within a given range, including decimals and fractions, rather than being restricted to discrete, countable whole numbers or categories. Examples of such variables often include physical measurements like height, weight, time, and temperature, as well as calculated metrics like standardized test scores or extensive psychological survey totals.

The requirement for continuous measurement is fundamental because Pearson’s r relies on calculating the means and standard deviations of the data, operations that are only mathematically meaningful for interval or ratio data. If your data are inherently nominal (e.g., gender, eye color) or ordinal (e.g., ranking systems, satisfaction levels), the basic arithmetic properties required for Pearson’s r are violated, leading to inaccurate conclusions regarding the strength of the relationship.

If your variables of interest are categorical, you should use the Phi Coefficient or Cramer’s V instead. If one of your variables is continuous and the other is binary, you should use Point Biserial. If your variables are ordinal, you should use Spearman’s Rho or Kendall’s Tau.

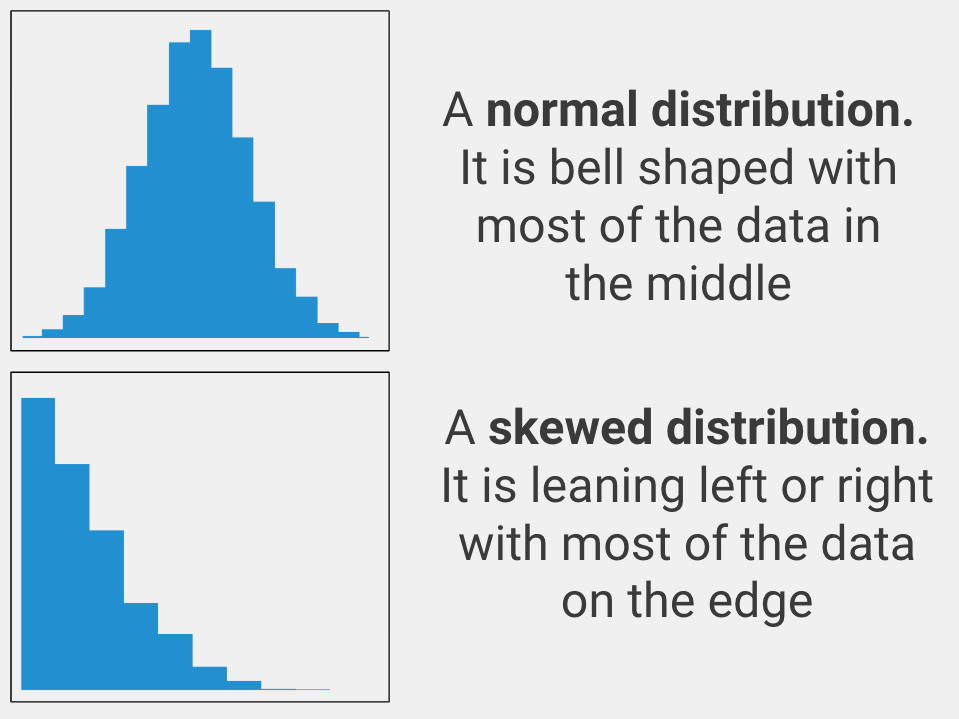

Distributional Assumption: Normality

A primary assumption for conducting inferential statistics based on Pearson’s r is that both continuous variables are approximately normally distributed. A normal distribution is characterized by the classic bell-shaped curve, where the majority of the data points cluster around the mean, and the distribution is symmetrical. While Pearson’s correlation coefficient itself is a descriptive statistic and is relatively robust to minor deviations from normality, the assumption is critical for the accurate calculation of the p-value and the subsequent hypothesis testing, particularly in smaller sample sizes.

Deviation from normality, often manifesting as skewness (data leaning heavily to one side) or kurtosis (too peaked or too flat), can severely distort the standard error of the correlation coefficient. Therefore, researchers must visually inspect histograms or Q-Q plots, or utilize formal statistical tests like the Kolmogorov-Smirnov or Shapiro-Wilk test, to confirm that the data approximates a normal distribution. Severe violations can lead to incorrect conclusions regarding the statistical significance of the observed relationship.
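Skewness and kurtosis can be screened numerically before running a formal test. The sketch below computes the moment-based sample skewness and excess kurtosis using only the standard library. It is a rough screen under the assumption that values near zero are consistent with normality, not a substitute for Shapiro-Wilk or a Q-Q plot. The function name and both data lists are hypothetical.

```python
def skewness_and_excess_kurtosis(values):
    """Moment-based skewness and excess kurtosis (both are 0 for a normal curve)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n  # variance (population form)
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    m4 = sum((v - mean) ** 4 for v in values) / n  # fourth central moment
    skew = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    return skew, excess_kurtosis


# Made-up samples: one symmetric, one with a long right tail.
symmetric = [1, 2, 3, 4, 5, 6, 7]
right_skewed = [1, 1, 1, 2, 2, 3, 10]

skew_sym, _ = skewness_and_excess_kurtosis(symmetric)
skew_right, _ = skewness_and_excess_kurtosis(right_skewed)
print(skew_sym, skew_right)  # symmetric sample gives 0; right tail gives a positive value
```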

If your variable is not normally distributed, the appropriate alternative non-parametric methods are Spearman’s Rho or Kendall’s Tau. These methods rely on ranking the data rather than using the raw values, thus bypassing the requirement for parametric assumptions.
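To illustrate the ranking idea, Spearman’s Rho can be sketched as Pearson’s r applied to the ranks of the data, with tied values sharing the average of their rank positions. The helper names below are assumptions made for this example, and the data are made up.

```python
import math


def average_ranks(values):
    """Rank values from 1 to n; tied values receive the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson_r(x, y):
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / math.sqrt(ss_x * ss_y)


def spearman_rho(x, y):
    """Spearman's rho: Pearson's r computed on ranks instead of raw values."""
    return pearson_r(average_ranks(x), average_ranks(y))


# Made-up data: Y always increases with X, but not along a straight line.
x = [1, 2, 3, 4, 5]
y = [1, 8, 27, 64, 125]
rho = spearman_rho(x, y)
r_linear = pearson_r(x, y)
print(rho, r_linear)
```

Because the ranks of the two series agree perfectly, rho reaches its maximum even though the raw values do not fall on a straight line and Pearson’s r stays below 1.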

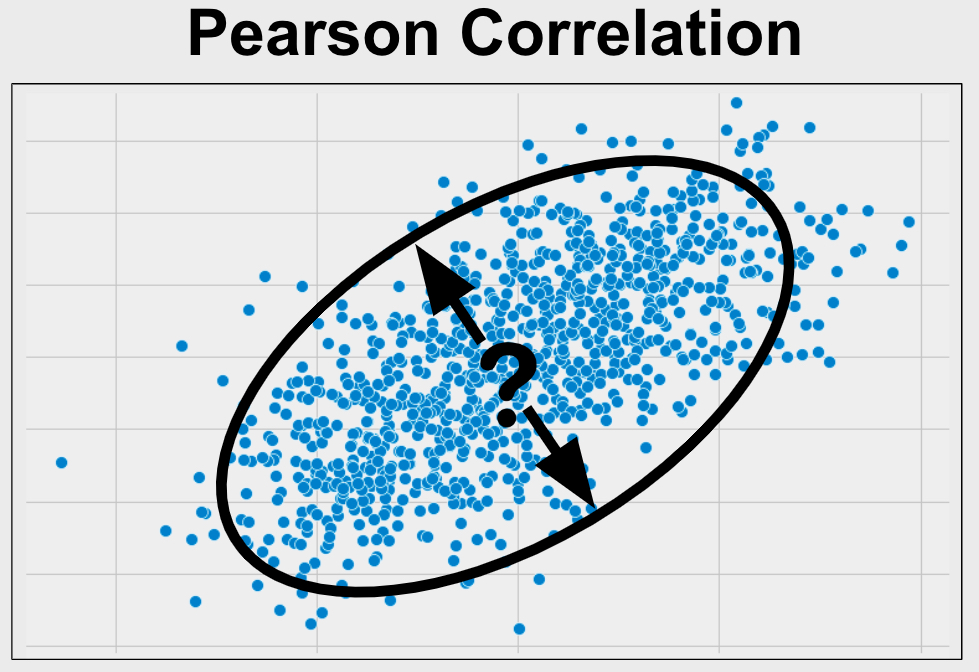

Relationship Form: Linearity

The core mathematical premise of the Pearson Correlation coefficient is that it exclusively measures the strength of the linear relationship between two variables. If the true relationship between X and Y is curvilinear (e.g., U-shaped, exponential, or logarithmic), Pearson’s r will significantly underestimate the true relationship or may even incorrectly suggest no relationship exists (a value close to zero), even when a strong association is present.

To verify the linearity assumption, it is mandatory to generate a scatter plot of the data. If the cloud of data points generally follows a straight line pattern—meaning a single straight line could reasonably pass through the center of the data—then the assumption of linearity is satisfied. Conversely, if the data points appear curved, clustered, or randomly scattered without a discernible straight-line pattern, the use of Pearson’s r is inappropriate.

If the relationship between your variables is not linear but still monotonic (consistently increasing or consistently decreasing), you should use Spearman’s Rho or Kendall’s Tau instead.
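The way a curvilinear pattern can hide from Pearson’s r is easy to reproduce. In the sketch below, Y is completely determined by X through a U-shaped rule, yet the coefficient comes out at zero because the downward and upward halves cancel. The data are made up for illustration, and `pearson_r` is a hypothetical helper written for this example.

```python
import math


def pearson_r(x, y):
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / math.sqrt(ss_x * ss_y)


# Made-up U-shaped data: y = x squared over a range symmetric around zero.
x = [-3, -2, -1, 0, 1, 2, 3]
y = [xi ** 2 for xi in x]

r_u_shape = pearson_r(x, y)
print(r_u_shape)  # no linear trend, despite a perfect deterministic relationship
```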

Robustness Check: Absence of Outliers

Pearson’s correlation coefficient is highly susceptible to the influence of outliers. An outlier is defined as a data point that deviates significantly from other observations, potentially skewing the mean and variance calculations upon which Pearson’s r is built. A single extreme data point, particularly one that is far from the general pattern of the data (often termed an influential observation), can drastically inflate or deflate the resulting correlation coefficient, leading to inaccurate conclusions about the population relationship.

Identifying outliers is best achieved visually through box plots or scatter plots, where such points will appear distinctly separated from the main cluster of data. The decision to remove an outlier must be approached cautiously and is ideally justified by a known data error (e.g., a typo during entry). Where removal is not justified, strategies like Winsorizing or transformation can mitigate the impact of extreme values. Alternatively, robust statistical methods that are less sensitive to extreme values should be considered.

If your variables do have outliers, you can remove them and use Pearson’s correlation or leave them in and use Spearman’s Rho or Kendall’s Tau instead.
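The sensitivity to a single extreme point can be demonstrated with a small made-up dataset. The sketch below computes the coefficient once on six nearly collinear points and again after appending one hypothetical data-entry error. All values and the `pearson_r` helper are illustrative assumptions.

```python
import math


def pearson_r(x, y):
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / math.sqrt(ss_x * ss_y)


# Made-up data that follow a nearly straight upward line.
x_clean = [1, 2, 3, 4, 5, 6]
y_clean = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0]

# The same data plus one hypothetical extreme observation.
x_with_outlier = x_clean + [7]
y_with_outlier = y_clean + [-20.0]

r_clean = pearson_r(x_clean, y_clean)
r_with_outlier = pearson_r(x_with_outlier, y_with_outlier)
print(r_clean, r_with_outlier)  # one point is enough to flip the sign here
```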

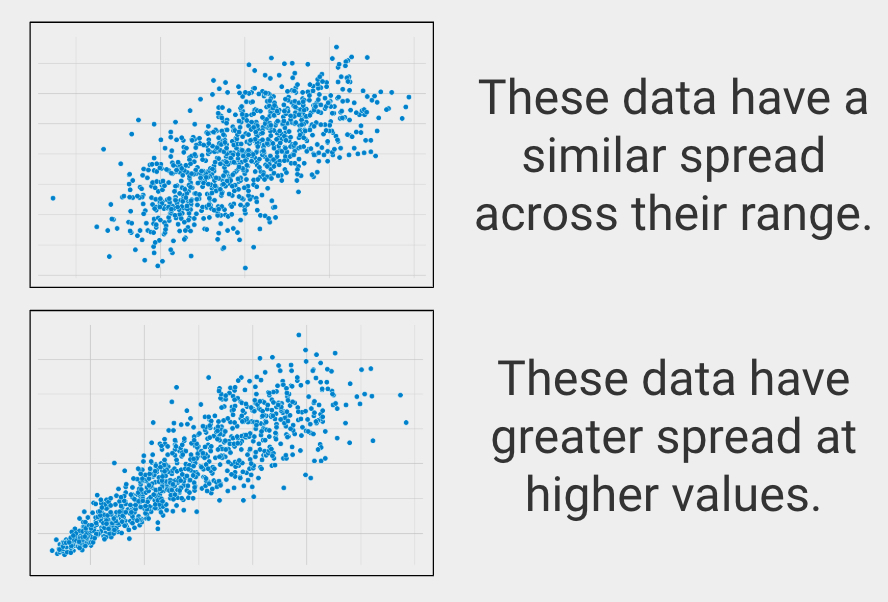

Variance Consistency: Homoscedasticity

The final assumption relates to the consistency of variance, known formally as homoscedasticity. This term translates literally to “similar scatter” and requires that the variance of the residuals (the deviations from the line of best fit) remains roughly equal across all levels of the independent variable. In simpler terms, when visualizing the data on a scatter plot, the spread of the data points around the regression line should look uniform, like a cigar shape rather than a cone shape.

Violation of this assumption, known as heteroscedasticity, occurs when the variance of the dependent variable differs across the range of the independent variable (e.g., the data points are tightly clustered at low values of X but widely spread out at high values of X). While Pearson’s r can still estimate the correlation in the presence of heteroscedasticity, the standard errors used for hypothesis testing become unreliable, potentially leading to inaccurate p-value calculations and inflated Type I error rates.

If the spread of one variable differs substantially across the range of the other, then you should use Spearman’s Rho or Kendall’s Tau instead.
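A scatter plot remains the primary check, but the cigar-versus-cone distinction can also be roughed out numerically. The sketch below fits a least-squares line, then compares the mean squared residual in the upper half of the X range with that in the lower half. A ratio far from 1 hints at heteroscedasticity. This is an informal heuristic under the assumption of a simple two-way split, not a formal test, and the function name and data are hypothetical.

```python
def residual_spread_ratio(x, y):
    """Mean squared residual for the upper half of x divided by the lower half."""
    pairs = sorted(zip(x, y))  # order observations by x
    n = len(pairs)
    mean_x = sum(px for px, _ in pairs) / n
    mean_y = sum(py for _, py in pairs) / n
    slope = (sum((px - mean_x) * (py - mean_y) for px, py in pairs)
             / sum((px - mean_x) ** 2 for px, _ in pairs))
    intercept = mean_y - slope * mean_x
    residuals = [py - (intercept + slope * px) for px, py in pairs]
    half = n // 2
    lower = sum(e * e for e in residuals[:half]) / half
    upper = sum(e * e for e in residuals[half:]) / (n - half)
    return upper / lower


x = list(range(1, 11))

# Made-up "cone" data: the scatter around y = 2x widens as x grows.
widening = [0.1, -0.1, 0.2, -0.2, 0.3, -1.0, 1.5, -2.0, 2.5, -3.0]
y_cone = [2 * xi + e for xi, e in zip(x, widening)]

# Made-up "cigar" data: the scatter stays the same size throughout.
steady = [0.3, -0.3, 0.3, -0.3, 0.3, -0.3, 0.3, -0.3, 0.3, -0.3]
y_cigar = [2 * xi + e for xi, e in zip(x, steady)]

cone_ratio = residual_spread_ratio(x, y_cone)
cigar_ratio = residual_spread_ratio(x, y_cigar)
print(cone_ratio, cigar_ratio)
```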

Determining Applicability: When to Employ Pearson’s r

The decision to use the Pearson Correlation is guided by a clear set of research objectives and data characteristics. This method is fundamentally designed for exploring simple, bivariate linear relationships. It is the ideal choice when your research goal is descriptive: understanding the degree to which two variables move together, without implying causality.

You should confidently proceed with a Pearson correlation analysis when the following conditions align with your research design and data properties:

- Your primary analytical goal is to establish the strength and direction of the relationship between two variables.

- Both variables involved are measured at the continuous (interval or ratio) level.

- You are analyzing a bivariate relationship (only two variables) and have not included any additional covariates whose influence needs to be statistically controlled.

To ensure clarity, we will elaborate on these essential conditions, distinguishing correlation from other forms of statistical inquiry like difference testing or complex prediction modeling.

Focus on Association, Not Causation or Difference

The core function of Pearson correlation is to quantify association. Researchers use this test when they are specifically interested in how two variables relate to one another—that is, whether they trend positively together or inversely against each other. It is crucial to remember that correlation does not imply causation; it merely measures co-occurrence. This objective fundamentally differs from tests of difference (e.g., t-tests or ANOVA), which assess whether the means of two or more groups are statistically distinct.

Furthermore, while a strong correlation makes linear prediction more accurate, Pearson’s r itself is not a prediction tool. Prediction analysis, such as linear regression, fits an equation that forecasts the value of a dependent variable from the value of an independent variable. Correlation, however, only summarizes the shared variability between the two measures.

Data Scale Requirement

As previously emphasized under the assumptions, the data scale is non-negotiable for Pearson’s r. Both variables must be continuous variables, meaning they are measured at the interval or ratio level, allowing for meaningful calculations of means and variances. Examples include quantifiable physical traits (e.g., age, blood pressure, laboratory measurements) or psychological constructs measured via standardized, validated scales.

The inability of Pearson’s r to handle non-continuous data stems from the fact that it calculates the covariance between two sets of raw scores. Data that fall into categories (e.g., nominal data like geographical region or binary data like success/failure) or data that are merely ordered without equal intervals between ranks (ordinal data) cannot satisfy the mathematical requirements of this parametric test. Using an inappropriate test on such data will yield coefficients that are statistically nonsensical.

Bivariate Constraint

Pearson Correlation is strictly a bivariate analysis; it is designed to measure the linear association between precisely two variables. While you might calculate multiple pairwise correlations within a larger dataset (a correlation matrix), each resulting coefficient (r) quantifies only the relationship between that specific pair of variables. It is not designed to handle multivariate relationships where the interaction of several independent variables predicts a single dependent variable.

For research questions involving three or more variables simultaneously—such as determining the underlying structure of a large set of variables or grouping observations based on shared traits—more sophisticated multivariate techniques are required. These methods move beyond simple pairwise association to model complex data structures.

If you have three or more variables, you could consider clustering methods instead.

Exclusion of Controlling Variables (Covariates)

Standard Pearson Correlation assumes that the relationship being observed is the direct and unmediated link between the two primary variables of interest. It does not account for the potential influence of third variables, often referred to as covariates, confounding variables, or control variables. A covariate is a variable measured alongside the primary variables that may systematically influence their relationship. For example, when examining the correlation between exercise time and resting heart rate, factors like age or smoking status are likely covariates that need consideration.

If a researcher suspects that a third variable might be artificially inflating, reducing, or even creating the observed correlation, it is essential to statistically control for its effect. Ignoring an important covariate can produce a spurious correlation, where the observed relationship is misleading because it is actually driven by the unmeasured third factor.

If you do have one or more covariates, you should use Partial Correlation instead.
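For a single covariate Z, the first-order partial correlation can be computed directly from the three pairwise Pearson coefficients. The sketch below uses the standard formula; the function name and the coefficient values are hypothetical and chosen only to show the effect of controlling for Z.

```python
import math


def partial_r(r_xy, r_xz, r_yz):
    """Correlation between X and Y after removing the linear influence of Z."""
    denominator = math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
    if denominator == 0:
        raise ValueError("undefined when Z is perfectly correlated with X or Y")
    return (r_xy - r_xz * r_yz) / denominator


# Hypothetical coefficients: X and Y each correlate 0.5 with Z and with each other.
adjusted = partial_r(r_xy=0.5, r_xz=0.5, r_yz=0.5)

# Hypothetical case where the X-Y correlation is fully accounted for by Z.
explained_away = partial_r(r_xy=0.48, r_xz=0.8, r_yz=0.6)
print(adjusted, explained_away)
```

In the second case the raw correlation of 0.48 equals the product of the two correlations with Z, so nothing remains once Z is controlled for.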

Interpreting Results: A Height and Weight Example

Consider a classic example in biometrics where we seek to determine the relationship between physical stature and mass. Our two continuous variables are: Variable 1: Height (measured in centimeters) and Variable 2: Weight (measured in kilograms). The objective is to quantify the degree to which individuals who are taller tend to weigh more or less than average. To test this, data is systematically collected from a representative sample of participants.

Crucially, prior to initiating the Pearson Correlation calculation, the researcher must perform meticulous assumption checking. This involves generating scatter plots to confirm linearity, using formal tests to verify normal distribution of both variables, and scrutinizing box plots to ensure the absence of influential outliers. Only once these preconditions are met can the analysis proceed, resulting in two primary statistical outputs: the correlation coefficient (Pearson’s r) and the statistical significance (p-value).

The resulting correlation coefficient, r, indicates both the strength and direction of the linear relationship. If the result is, for instance, r = 0.75, this indicates a strong positive linear relationship: as height increases, weight tends to increase. Conversely, a value such as r = -0.30 suggests a weak inverse relationship. The closer r is to 1 or -1, the stronger the linear association between the two variables.

The associated p-value indicates the statistical significance of the observed correlation. This probability represents the chance of observing a correlation coefficient as extreme as, or more extreme than, the one calculated, assuming that the null hypothesis (i.e., that the true population correlation is zero) is correct. By common convention, a p-value below 0.05 is treated as statistically significant. A significant result means that a correlation this large would be unlikely to arise from random sampling fluctuation alone if the true population correlation were zero. It does not, by itself, show that the relationship is strong or causal.
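The p-value for Pearson’s r is conventionally derived from a t statistic with n - 2 degrees of freedom. The sketch below computes that statistic for the r = 0.75 example, assuming a hypothetical sample of 30 participants (the sample size is an assumption made for this illustration). Converting t into a p-value requires the t distribution, which statistical libraries provide; SciPy’s `scipy.stats.pearsonr`, for example, reports r and the p-value together.

```python
import math


def t_statistic(r, n):
    """t statistic for testing whether the population correlation is zero."""
    if n < 3:
        raise ValueError("at least 3 observations are needed")
    if abs(r) >= 1:
        raise ValueError("t is undefined for a perfect correlation")
    return r * math.sqrt((n - 2) / (1 - r ** 2))


# r from the height and weight example; n = 30 is an assumed sample size.
t_value = t_statistic(r=0.75, n=30)
degrees_of_freedom = 30 - 2
print(t_value, degrees_of_freedom)
```

With 28 degrees of freedom, the two-sided critical value at the 0.05 level is roughly 2.05, so a statistic of this size would be judged significant under the assumed sample size.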